For the last three years, the dominant narrative in artificial intelligence has been simple: bigger is better. Training frontier models required thousands of top-tier GPUs clustered in massive, localized data centers, restricting state-of-the-art AI to a handful of trillion-dollar tech giants.

A breakthrough from the Massachusetts Institute of Technology (MIT) may radically alter that trajectory. Researchers have published a new method that uses advanced control theory to aggressively prune unnecessary complexity from neural networks during training, cutting compute costs by up to 60% without sacrificing benchmark performance.

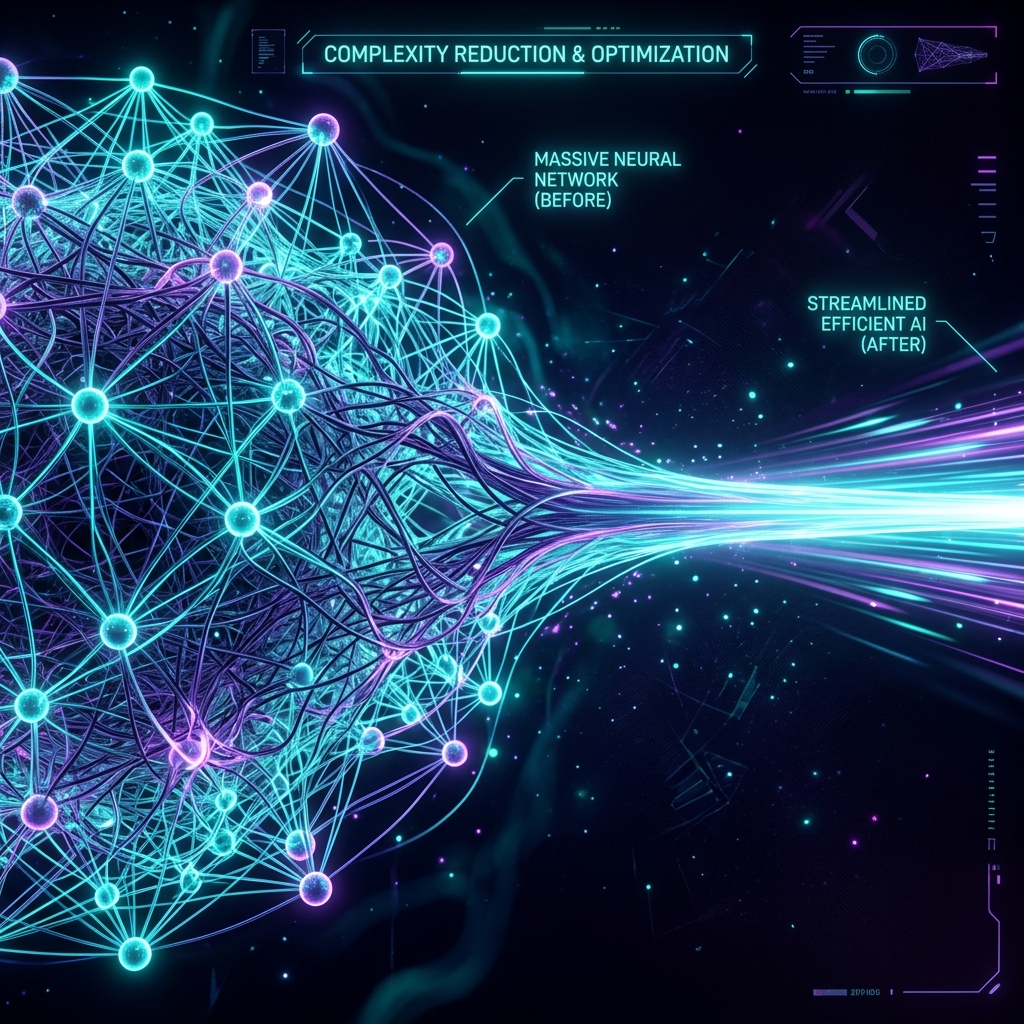

The Shift from Brute Force to Elegance

Today, training a large language model is an exercise in brute force. Models start as dense, randomly initialized layers of parameters, and training slowly beats them into shape using mountains of electricity and data.

The MIT team approached the problem differently. They applied control theory, a mathematical framework typically used to manage engineering systems such as aircraft flight paths or power grids, to build a system that dynamically measures the “useful trajectory” of neural weights during training.

If a cluster of neurons isn’t actively contributing to predictive accuracy, the algorithm removes it in real time. Instead of training a dense 500-billion-parameter model and pruning it afterward, the model stays lean and efficient throughout its entire lifecycle.
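The article does not publish the algorithm, so the following is only a toy sketch of the general idea of pruning during training. It is not the MIT control-theory method. It assumes a linear model in which each input weight stands in for a “cluster of neurons” and swaps in a simple, generic contribution score (mean |w_i · x_i| over the batch) compared against a fixed threshold. The names `make_data` and `train_with_pruning` and all parameter values are hypothetical. Once a weight’s score drops below the threshold after a warm-up period, it is zeroed and skipped in every later pass, which is where the compute saving comes from.

```python
import random


def make_data(n_samples, n_features, true_weights, seed=0):
    """Hypothetical toy data: only the first len(true_weights) features matter."""
    rng = random.Random(seed)
    padded = list(true_weights) + [0.0] * (n_features - len(true_weights))
    xs = [[rng.uniform(-1.0, 1.0) for _ in range(n_features)] for _ in range(n_samples)]
    ys = [sum(w * x for w, x in zip(padded, row)) for row in xs]
    return xs, ys


def train_with_pruning(xs, ys, lr=0.5, steps=200, warmup=60, threshold=0.05, seed=1):
    """Full-batch gradient descent on a linear model that prunes weights mid-training.

    Generic saliency-style pruning, NOT the MIT control-theory method: after
    `warmup` steps, any weight whose contribution score mean(|w_i * x_i|)
    falls below `threshold` is fixed at zero and skipped from then on.
    Returns (weights, active_mask, fraction_of_multiply_adds_saved).
    """
    rng = random.Random(seed)
    n, d = len(xs), len(xs[0])
    w = [rng.uniform(-0.1, 0.1) for _ in range(d)]
    active = [True] * d
    # mean |x_i| never changes, so the contribution score is |w_i| * mean_abs[i]
    mean_abs = [sum(abs(row[i]) for row in xs) / n for i in range(d)]
    macs_used = 0

    for step in range(steps):
        idx = [i for i in range(d) if active[i]]
        grad = [0.0] * d
        for row, y in zip(xs, ys):
            err = sum(w[i] * row[i] for i in idx) - y  # forward pass, active weights only
            for i in idx:
                grad[i] += err * row[i] / n  # gradient of 0.5 * mean squared error
        macs_used += 2 * n * len(idx)  # one forward + one backward MAC per active weight
        for i in idx:
            w[i] -= lr * grad[i]

        if step >= warmup:
            for i in idx:
                if abs(w[i]) * mean_abs[i] < threshold:
                    active[i] = False  # prune: frozen at zero for the rest of training
                    w[i] = 0.0

    dense_macs = 2 * n * d * steps
    return w, active, 1.0 - macs_used / dense_macs


xs, ys = make_data(n_samples=500, n_features=8, true_weights=[2.0, -3.0, 0.5])
w, active, saved = train_with_pruning(xs, ys)
print("kept:", [i for i, a in enumerate(active) if a])  # expected: kept: [0, 1, 2]
print("weights:", [round(v, 3) for v in w])
print(f"multiply-adds saved vs. dense: {saved:.0%}")
```

Because pruning happens mid-run rather than after training, the skipped multiply-accumulates are saved during training itself. The savings figure this toy prints reflects only its own setup and says nothing about the 60% reported for the study.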

Democratizing Frontier AI

The implications of this efficiency leap are hard to overstate. By drastically lowering the barrier to entry, the technique could soon let smaller startups, university labs, and open-source consortiums train models on par with GPT-5 or Gemini Elite using a fraction of the hardware.

“We’ve hit the physical wall of what data centers and national power grids can handle,” said the lead author of the MIT study. “To keep moving forward, we needed a fundamental math breakthrough, not just more chips. We believe control theory is the elegant solution AI has been missing.”

As hardware giants watch the development closely, software firms are already racing to integrate the MIT technique into their standard training pipelines, a sign that the brute-force compute era may be coming to an end.