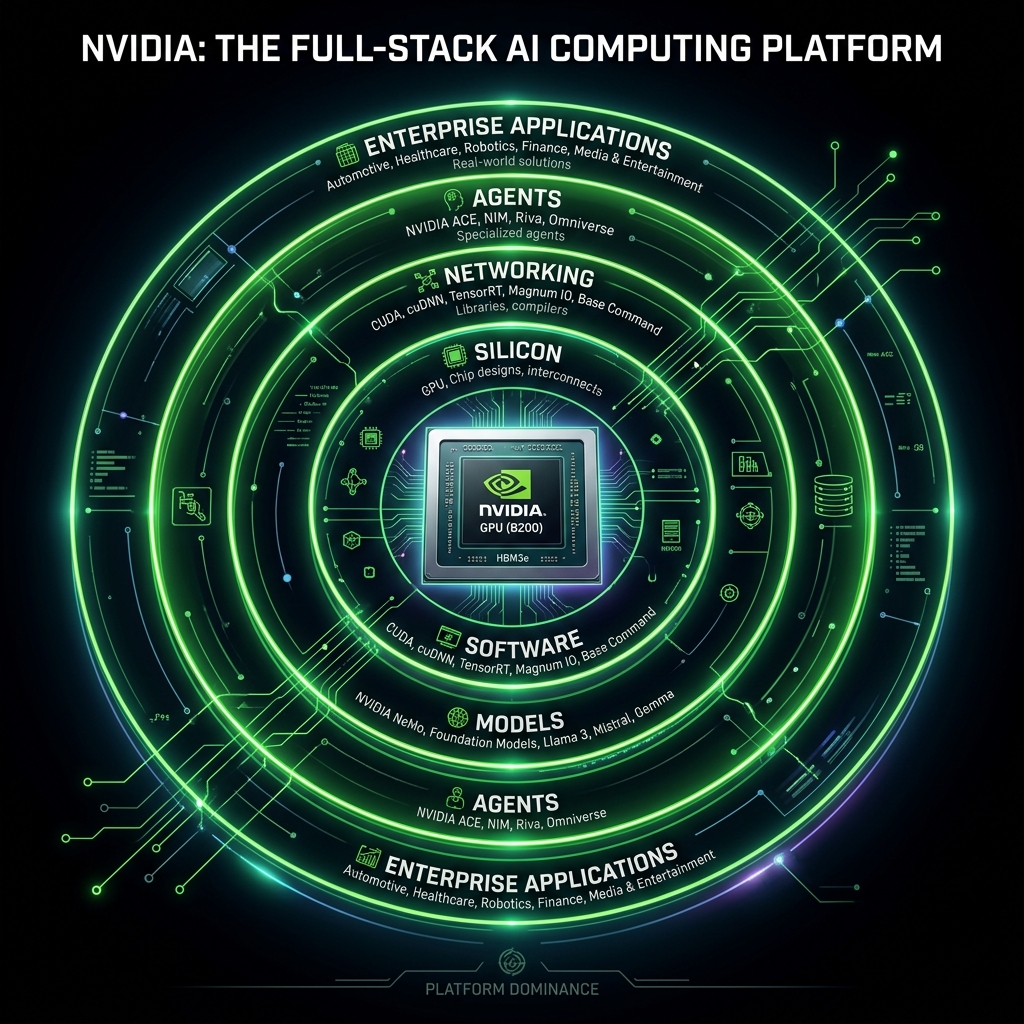

Nvidia is no longer just the company that makes the chips powering AI. It’s becoming the company that controls the entire enterprise AI pipeline — from silicon interconnects to deployment tools to autonomous agent frameworks. And that transformation is accelerating in 2026.

The Full-Stack Vision

Nvidia’s strategy extends far beyond selling GPUs. The company is building an integrated platform that spans every layer of the AI stack:

Silicon & Infrastructure

- Vera Rubin DSX AI Factory: Rack-scale reference architectures that combine GPUs, CPUs (Vera CPU), networking (Spectrum-X), and storage into standardized, repeatable systems

- NVLink: Proprietary high-bandwidth interconnects that create deep hardware-level integration

- Custom silicon roadmap: Annual hardware refresh cycles that keep enterprises locked into the Nvidia ecosystem

Software & Orchestration

- Run:ai acquisition: Centralized orchestration and governance for AI workloads across on-premises, cloud, and hybrid environments

- Nvidia AI Enterprise: A comprehensive software suite that acts as an “operating system” for enterprise AI

- Policy-driven resource management: Standardized tools for managing compute allocation, model deployment, and cost optimization

Models & Agents

- Nemotron: Nvidia’s own model family, positioning the company as both platform and model provider

- NemoClaw and OpenClaw: Frameworks for deploying autonomous agents within enterprise systems with safety and access controls

- Agent infrastructure: Standardized pipelines for agentic AI that integrate with Nvidia’s hardware and software stack

Industrial & Physical AI

- Omniverse: Digital twin platform for industrial simulation and physical AI development

- OSMO: “Prompt-driven” physical AI development platform managing pipelines from data ingestion to deployment

- Siemens and industrial partnerships: Deep integration into manufacturing, robotics, and industrial automation workflows

The Platform Playbook

Nvidia’s strategy mirrors historical platform consolidation plays:

- AWS model: Start with infrastructure, expand to services, become the default platform for building everything

- Microsoft model: Own the operating layer that enterprises depend on, creating switching costs that compound over time

- Lock-in architecture: By standardizing the entire AI data path — from storage to GPU to model to agent — Nvidia creates deep architectural dependencies that are extremely difficult to unwind

Controlling the Data Plane

One of the most underappreciated aspects of Nvidia’s strategy is its push to control the AI data plane:

- Nvidia AI Data Platform: Standardized data architecture for AI workloads

- STX storage integration: Reference designs that integrate directly into the storage stack, giving Nvidia architectural control over how data flows to GPUs

- Prescriptive architectures: Rather than adapting to existing enterprise infrastructure, Nvidia is increasingly dictating how that infrastructure should be designed

Competitive Implications

Nvidia’s platform ambitions create tensions across the industry:

- Cloud providers: AWS, Azure, and Google Cloud are simultaneously customers and potential competitors

- Model companies: OpenAI, Anthropic, and Google are building on Nvidia’s hardware while Nvidia builds its own models

- Enterprise software: Traditional enterprise vendors face the prospect of Nvidia controlling the underlying platform their products run on

- AMD and Intel: Hardware competitors face not just chip-level competition but ecosystem-level lock-in that makes switching prohibitively expensive for customers

Why It Matters

Nvidia’s transformation from chip maker to platform owner may be the most consequential strategic shift in the AI industry. If it succeeds, the company won’t just profit from the AI boom; it will control the infrastructure layer on which the entire enterprise AI economy operates.

The question is whether the AI industry will allow a single company to control the full stack, or whether competitive pressures and open-source alternatives will prevent the kind of platform monopoly Nvidia is building toward.

Sources: moneymorning.com, nvidia.com, constellationr.com, forbes.com